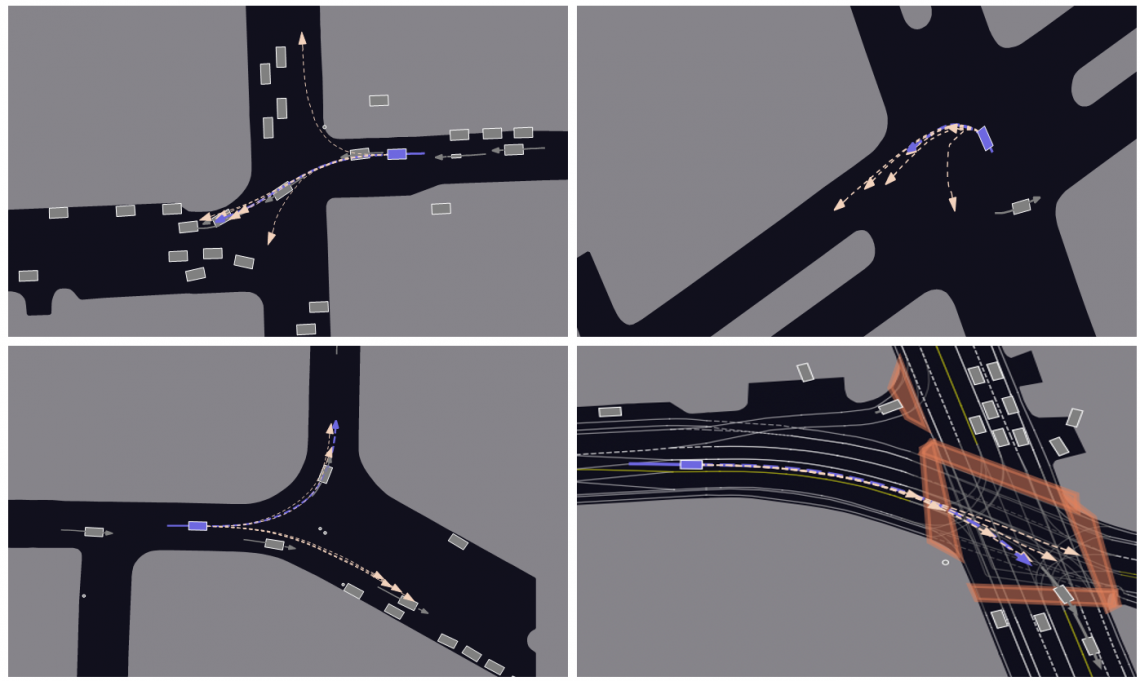

QCNet can capture the intentions of road users, accurately predicting multiple possible movements of surrounding vehicles. (Credit: Professor Wang’s research group / City University of Hong Kong)

Professor Wang Jianping of the Department of Computer Science (CS) at CityU, who led the study, explained that precise, real-time prediction is critically important in autonomous driving, as even minimal delays or errors can lead to catastrophic accidents.

However, existing behaviour prediction solutions often either struggle to understand driving scenarios correctly or make their predictions inefficiently. They typically re-normalise and re-encode the latest positional data of surrounding objects and the environment every time the vehicle, and with it the observation window, moves forward, even though the new data substantially overlaps the preceding data. This leads to redundant computation and added latency in real-time online prediction.

To overcome these limitations, Professor Wang and her team presented a breakthrough trajectory prediction model called “QCNet”, which can, in theory, support streaming processing. The model encodes positions using relative spacetime coordinates, which gives it two valuable properties: “roto-translation invariance in the space dimension” and “translation invariance in the time dimension”.
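
To make the two invariance properties concrete, the following Python sketch expresses one road user's state relative to another's. It is a hypothetical illustration, not QCNet's actual code: the state layout `(x, y, heading, t)` and the functions `to_local`, `relative_encoding` and `transform` are assumptions. The relative position is rotated into the source agent's heading-aligned frame, and only heading and time differences are kept, so applying the same global rotation, translation and time shift to both states leaves the encoding unchanged.

```python
import math
from typing import Tuple

# Hypothetical state layout: (x, y, heading in radians, time in seconds)
State = Tuple[float, float, float, float]


def wrap_angle(angle: float) -> float:
    """Wrap an angle to the interval [-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


def to_local(px: float, py: float, ox: float, oy: float, heading: float) -> Tuple[float, float]:
    """Express global point (px, py) in the frame of an observer at (ox, oy) facing `heading`."""
    dx, dy = px - ox, py - oy
    c, s = math.cos(heading), math.sin(heading)
    # Rotate by -heading: local x points along the observer's heading, local y to its left.
    return (c * dx + s * dy, -s * dx + c * dy)


def relative_encoding(src: State, dst: State) -> State:
    """Relative spacetime features of `dst` as seen from `src`."""
    lx, ly = to_local(dst[0], dst[1], src[0], src[1], src[2])
    # Only differences are kept, so no absolute position, heading or time survives.
    return (lx, ly, wrap_angle(dst[2] - src[2]), dst[3] - src[3])


def transform(state: State, rot: float, shift: Tuple[float, float], time_shift: float) -> State:
    """Re-express a state in another observer's global frame (rotation, translation, time shift)."""
    x, y, heading, t = state
    c, s = math.cos(rot), math.sin(rot)
    return (c * x - s * y + shift[0], s * x + c * y + shift[1], heading + rot, t + time_shift)


if __name__ == "__main__":
    a = (1.0, 2.0, 0.3, 0.0)
    b = (4.0, -1.0, 1.2, 3.0)
    moved = [transform(s, 0.7, (5.0, -3.0), 10.0) for s in (a, b)]
    print(relative_encoding(a, b))
    print(relative_encoding(*moved))  # same values up to floating-point rounding
```

Because `relative_encoding` depends only on differences expressed in the source agent's own frame, any choice of global origin, orientation or clock cancels out.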

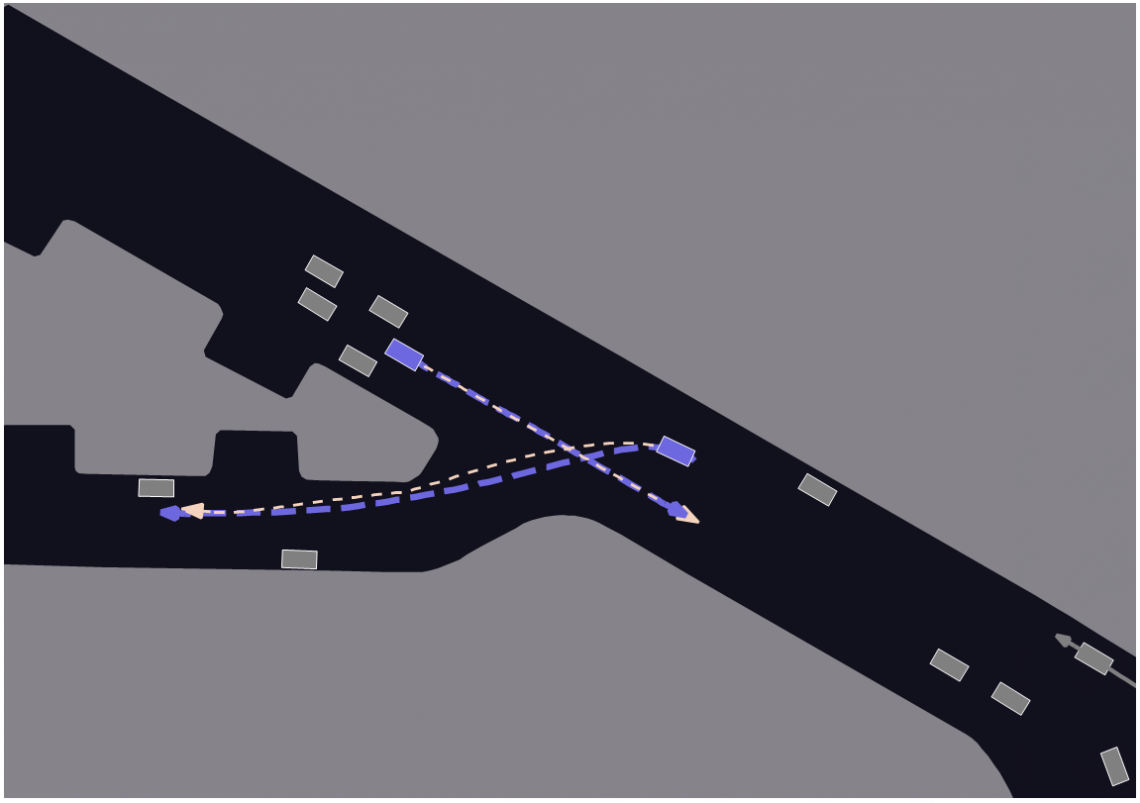

QCNet can understand the rules of the road and the interactions among multiple road users, predicting map-compliant and collision-free future trajectories. (Credit: Professor Wang’s research group / City University of Hong Kong)

These two properties ensure that the positional information extracted from a driving scenario is unique and fixed, regardless of the space-time coordinate system from which the scenario is viewed. As a result, previously computed coordinate encodings can be cached and reused, enabling the prediction model, in theory, to operate in real time.
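
The caching argument can be illustrated with a toy Python sketch. It assumes that each time step's encoding does not depend on the frame from which the scene is observed, so it can be computed once and reused as the observation window slides forward. `StreamingEncoderCache`, `encode_fn` and `naive_encode_calls` are hypothetical names, and the encoder here is a trivial stand-in for a learned network.

```python
from collections import deque
from typing import Any, Callable, List


class StreamingEncoderCache:
    """Hypothetical sketch: keeps per-frame encodings for a sliding observation window."""

    def __init__(self, window: int, encode_fn: Callable[[Any], Any]):
        self.encode_fn = encode_fn
        self.cache = deque(maxlen=window)  # the oldest encoding is dropped automatically
        self.encode_calls = 0

    def step(self, frame: Any) -> List[Any]:
        """Encode only the newest frame and reuse the cached encodings of earlier frames."""
        self.encode_calls += 1
        self.cache.append(self.encode_fn(frame))
        return list(self.cache)


def naive_encode_calls(num_steps: int, window: int) -> int:
    """Encoder calls needed if every frame in the window is re-encoded at every step."""
    return sum(min(step + 1, window) for step in range(num_steps))


if __name__ == "__main__":
    cache = StreamingEncoderCache(window=3, encode_fn=lambda frame: frame * 2)
    for frame in range(5):
        window_encodings = cache.step(frame)
    print(window_encodings, cache.encode_calls, naive_encode_calls(5, 3))  # [4, 6, 8] 5 12
```

With a three-frame window, five streamed frames cost five encoder calls with the cache, compared with twelve when the whole window is re-encoded at every step, which is the kind of redundant computation attributed to existing methods.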

The team also incorporated the relative positions of road users, lanes and crosswalks into the AI model to capture their relationships and interactions in driving scenarios. This enhanced understanding of the rules of the road and the interactions among multiple road users enables the model to generate collision-free predictions while accounting for uncertainty in the future behaviour of road users.
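
A minimal Python sketch of such relational features follows. It assumes that each road user or map element is summarised by a single reference point and heading, and that only pairs within a fixed interaction radius are connected; both are simplifying assumptions for illustration. The `Element` class and the `relation` and `build_edges` functions are hypothetical, and QCNet's actual learned relational encoding is not reproduced here. Because distance, bearing in the source's frame and relative heading are all relative quantities, these features are also unaffected by a global rotation or translation of the scene.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Tuple

Features = Tuple[float, float, float]  # (distance, bearing, relative heading)


@dataclass
class Element:
    """A road user or map element reduced to one reference point and a heading (an assumption)."""
    name: str
    x: float
    y: float
    heading: float  # radians; for a lane or crosswalk, e.g. its direction


def wrap_angle(angle: float) -> float:
    """Wrap an angle to [-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


def relation(src: Element, dst: Element) -> Features:
    """Distance, bearing in src's frame, and relative heading from src to dst."""
    dx, dy = dst.x - src.x, dst.y - src.y
    distance = math.hypot(dx, dy)
    bearing = wrap_angle(math.atan2(dy, dx) - src.heading)
    return distance, bearing, wrap_angle(dst.heading - src.heading)


def build_edges(
    agents: List[Element], elements: List[Element], radius: float
) -> Dict[Tuple[str, str], Features]:
    """Relational features from each agent to every other element within `radius`."""
    edges = {}
    for agent in agents:
        for other in elements:
            if other.name == agent.name:
                continue  # skip self-pairs
            features = relation(agent, other)
            if features[0] <= radius:
                edges[(agent.name, other.name)] = features
    return edges


if __name__ == "__main__":
    car = Element("car_1", 0.0, 0.0, 0.0)
    lane = Element("lane_7", 3.0, 4.0, math.pi / 2)
    crosswalk = Element("crosswalk_2", 100.0, 0.0, 0.0)
    # Only lane_7 lies within the radius of 10 units.
    print(build_edges([car], [car, lane, crosswalk], radius=10.0))
```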

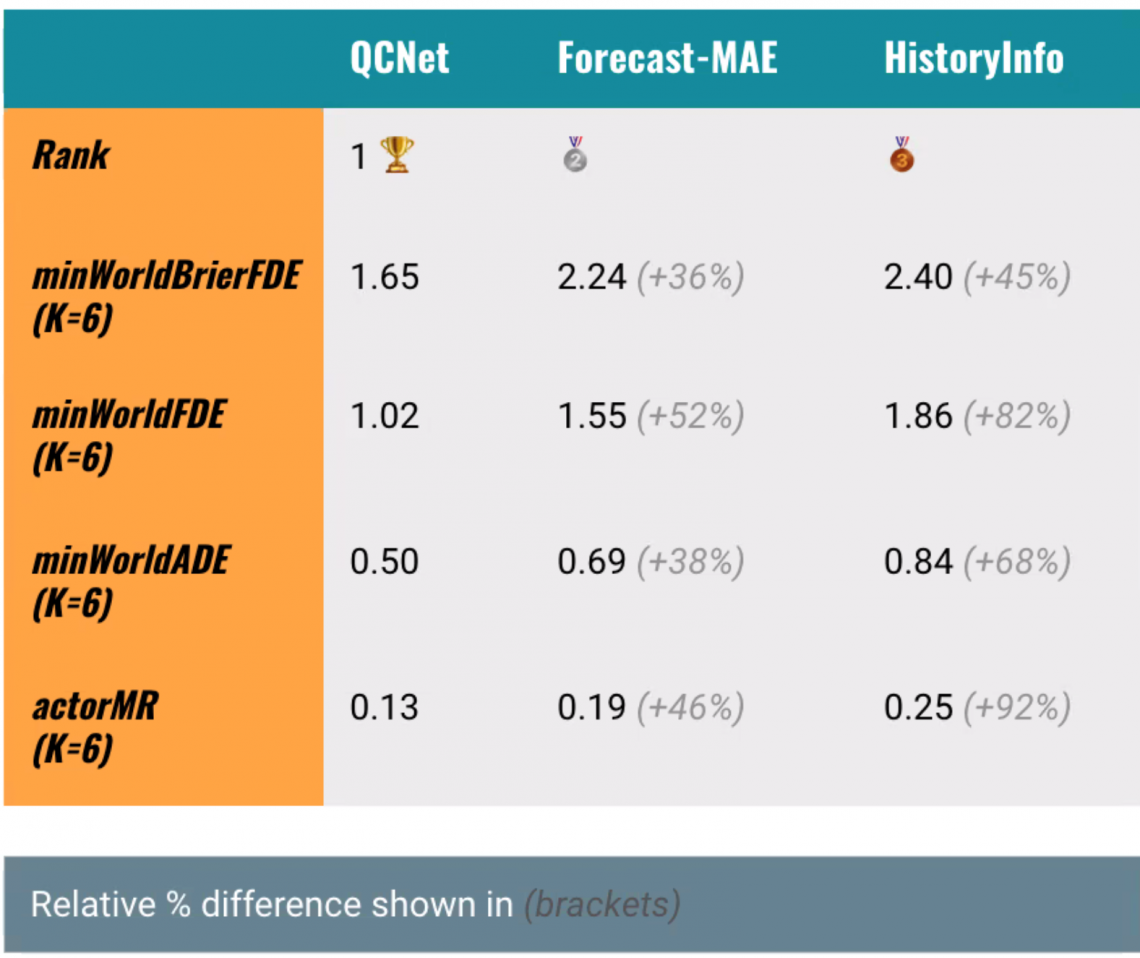

QCNet achieved the best performance among the compared approaches on Argoverse 1 and Argoverse 2, and won first place in the Argoverse 2 Multi-Agent Motion Forecasting Competition at CVPR 2023. (Credit: Professor Wang’s research group / City University of Hong Kong)

To evaluate the efficacy of QCNet, the team used “Argoverse 1” and “Argoverse 2”, two large-scale open-source collections of autonomous driving data and high-definition maps from different U.S. cities. These datasets are considered the most challenging benchmarks for behaviour prediction, comprising over 320,000 data sequences and 250,000 scenarios, respectively.

In testing, QCNet predicted road users’ future movements both quickly and accurately, even over long horizons of up to six seconds. It ranked first among 333 prediction approaches on Argoverse 1 and among 44 approaches on Argoverse 2. Moreover, QCNet significantly reduced online inference latency from 8 ms to 1 ms and improved efficiency by over 85% in the densest traffic scene, which involves 190 road users and 169 map polygons such as lanes and crosswalks.
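
For reference, the relative reduction implied by the two latency figures can be worked out directly. The text does not state explicitly whether the “over 85%” efficiency gain refers to this same measurement, but the numbers are consistent with that reading:

$$
\frac{8\,\text{ms} - 1\,\text{ms}}{8\,\text{ms}} = \frac{7}{8} = 87.5\%
$$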

“By integrating this technology into autonomous driving systems, the autonomous vehicles can effectively understand their surroundings, predict the future behaviour of other users more accurately, and make safer and more human-like decisions, paving the way for safe autonomous driving,” said Professor Wang. “We plan to apply this technology to more applications in autonomous driving, including traffic simulations and human-like decision-making.”

The research findings were presented under the title “Query-Centric Trajectory Prediction” at the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR 2023), an influential annual academic conference in computer vision, which was held in Canada this year.

Professor Wang Jianping (right) and Mr Zhou Zikang (left). (Credit: Professor Wang’s research group / City University of Hong Kong)

The first author is Mr Zhou Zikang, a PhD student in Professor Wang’s research group in the Department of CS at CityU. The corresponding author is Professor Wang. Also contributing to the research were collaborators from the Hon Hai Research Institute, a research centre established by Hon Hai Technology Group (Foxconn®), and from Carnegie Mellon University in the U.S. The findings will be integrated into Hon Hai Technology Group’s autonomous driving system to enhance real-time prediction efficiency and self-driving safety.

The research was supported by various funding sources, including the Hon Hai Research Institute, the Research Grants Council of Hong Kong and the Shenzhen Science and Technology Innovation Commission.