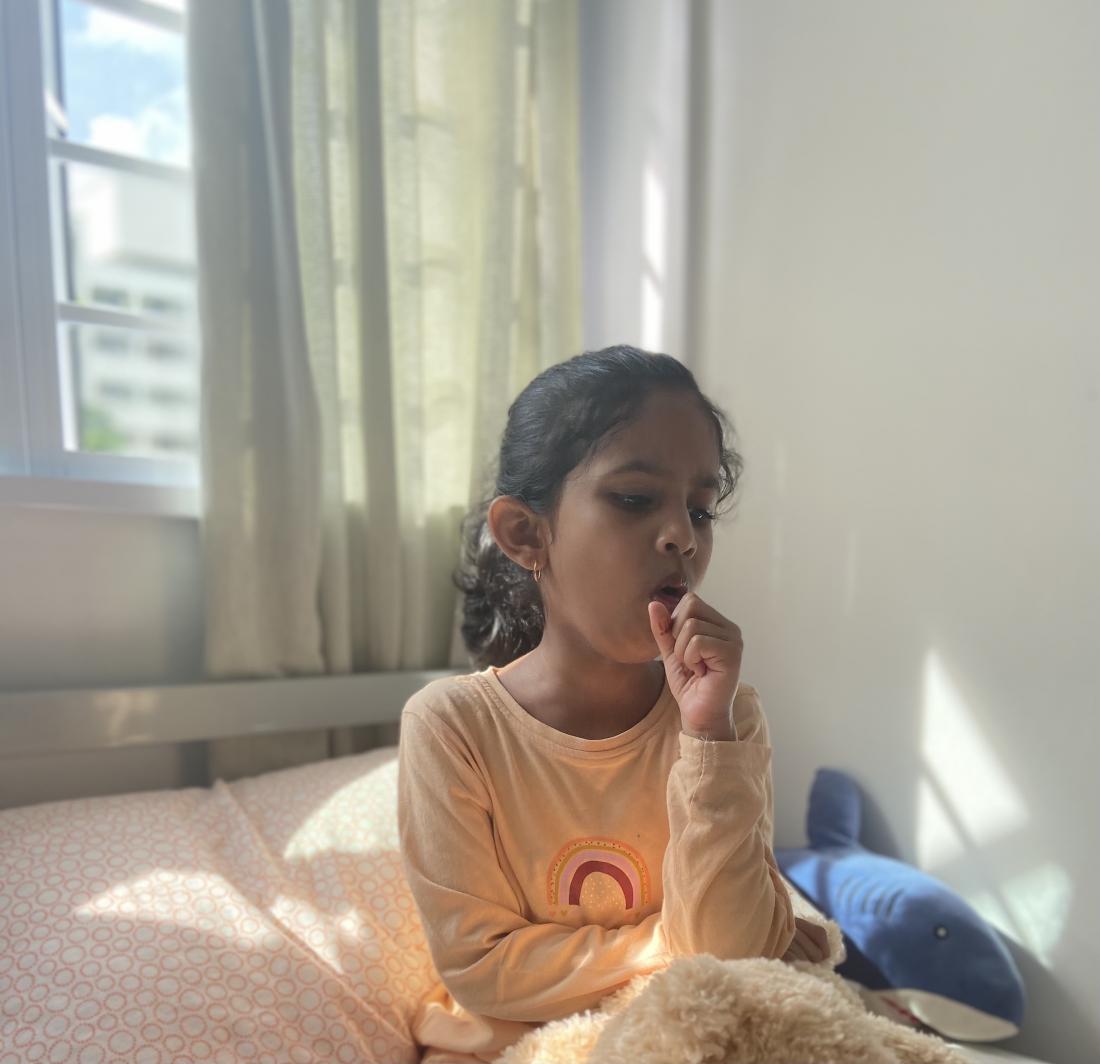

A Bidirectional Long-Short-Term Memory model can use cough sounds to distinguish sick from healthy children, paving the way for preliminary screening.

Researchers from the Singapore University of Technology and Design (SUTD) have shown that deep learning models can accurately distinguish between healthy and sick children using only their cough sounds. These findings, published in the journal Sensors, could open the doors to more efficient screening for respiratory diseases in kids—and may lift a huge burden off of patients, parents and physicians alike.

In children, cough can be a sign of several respiratory illnesses, including asthma, rhinosinusitis and infections of the respiratory tract. The ubiquity of cough as a symptom means that doctors often have to conduct additional tests and procedures in order to deliver a definite diagnosis.

“These tests require hospital visits, are not without risks to the child and place demands on healthcare resources,” said Assistant Professor Chen Jer-Ming from SUTD, who led the study. “Moreover, such visits have other negative social or economic impacts on the child and his or her family, such as time away from work and requiring specific childcare arrangements.”

The need to ease this burden on patients as well as the overall healthcare system has led to growing interest in using minute differences in cough sounds to distinguish one respiratory condition from another. However, most studies have relied on cough audio carefully recorded in studio settings, which makes their methods hard to transfer to real-world applications, where background noise and low-grade equipment could compromise the quality of the recorded coughs.

To address this problem, Asst Prof Chen and collaborator Dr Hee Hwan Ing from KK Women’s and Children’s Hospital and Duke-NUS Medical School used cough recordings collected with smartphones in a live hospital setting, to reflect true ‘ecological’ conditions. Next, to help them accurately classify the cough recordings as diseased or healthy, the team turned to a specific type of deep neural network model called Bidirectional Long-Short-Term Memory (BiLSTM).

Unlike many other artificial neural networks, BiLSTMs are built from individual units that can remember values over arbitrary stretches of time, and they read each sequence both forwards and backwards. Such a memory mechanism, Asst Prof Chen explained, makes BiLSTMs particularly suited to handling sequential data like audio.
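To make the memory mechanism concrete, here is a minimal sketch of a bidirectional LSTM pass written in plain Python. It is not the team's model: the weights are random and untrained, and the class `LSTMLayer`, the function `bilstm`, the layer sizes and the three toy "audio frames" are all assumptions made for illustration. It only shows how one LSTM reads the sequence from start to end, a second reads it from end to start, and the two outputs are joined at every time step.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


class LSTMLayer:
    """One-direction LSTM with random, untrained weights (illustrative only)."""

    def __init__(self, input_size, hidden_size, rng):
        self.hidden_size = hidden_size
        n = input_size + hidden_size
        # One weight matrix and bias vector per gate:
        # input (i), forget (f), output (o) and candidate values (g).
        self.w = {
            name: [[rng.uniform(-0.5, 0.5) for _ in range(n)]
                   for _ in range(hidden_size)]
            for name in "ifog"
        }
        self.b = {name: [0.0] * hidden_size for name in "ifog"}

    def _gate(self, name, z, activation):
        return [
            activation(sum(w * v for w, v in zip(row, z)) + bias)
            for row, bias in zip(self.w[name], self.b[name])
        ]

    def step(self, x, h, c):
        z = x + h  # concatenate the current frame with the previous hidden state
        i = self._gate("i", z, sigmoid)
        f = self._gate("f", z, sigmoid)
        o = self._gate("o", z, sigmoid)
        g = self._gate("g", z, math.tanh)
        # The cell state c is the unit's memory: the forget gate decides how
        # much of the old value to keep, the input gate how much new to add.
        c_new = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c, i, g)]
        h_new = [ok * math.tanh(ck) for ok, ck in zip(o, c_new)]
        return h_new, c_new

    def run(self, frames):
        h = [0.0] * self.hidden_size
        c = [0.0] * self.hidden_size
        outputs = []
        for x in frames:
            h, c = self.step(x, h, c)
            outputs.append(h)
        return outputs


def bilstm(frames, forward_layer, backward_layer):
    """Join a start-to-end pass and an end-to-start pass at each time step."""
    forward = forward_layer.run(frames)
    backward = backward_layer.run(frames[::-1])[::-1]
    return [f + b for f, b in zip(forward, backward)]


rng = random.Random(0)
forward_layer = LSTMLayer(input_size=3, hidden_size=2, rng=rng)
backward_layer = LSTMLayer(input_size=3, hidden_size=2, rng=rng)

# Three made-up feature frames standing in for a short cough recording.
frames = [[0.1, 0.2, 0.3], [0.0, -0.1, 0.4], [0.5, 0.5, -0.2]]
outputs = bilstm(frames, forward_layer, backward_layer)
print(len(outputs), len(outputs[0]))  # 3 time steps, 2 + 2 = 4 values each
```

In this sketch, the first two values at each time step summarise everything heard so far, while the last two summarise everything still to come. A classifier placed on top therefore sees each moment of the cough in the context of the whole recording.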

To train and test their model, the team used cough recordings from 89 children with asthma, 160 with lower respiratory tract infection and 78 with upper respiratory tract infection. For comparison, they also included cough sounds from 89 healthy children.

The team found that BiLSTM could accurately classify individual cough sounds as healthy or diseased 84.5 percent of the time. When all of a patient’s audio samples were considered together, the model’s accuracy rose to 91.2 percent. This means that out of every 10 patients who provide cough recordings, BiLSTM can be expected to correctly identify about nine as either healthy or sick.
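One way to see why patient-level accuracy can exceed per-cough accuracy is to pool each patient's coughs before deciding. The sketch below assumes a simple majority vote; the article does not say how the team combined a patient's samples, so the voting rule, the function names and the toy labels are all hypothetical.

```python
from collections import Counter, defaultdict


def patient_level_predictions(cough_predictions):
    """Turn (patient_id, predicted_label) pairs into one label per patient.

    Assumes majority voting; ties go to the label that appeared first.
    """
    votes = defaultdict(Counter)
    for patient_id, label in cough_predictions:
        votes[patient_id][label] += 1
    return {pid: counts.most_common(1)[0][0] for pid, counts in votes.items()}


def accuracy(predicted, actual):
    """Fraction of patients whose predicted label matches the true label."""
    correct = sum(predicted[pid] == actual[pid] for pid in actual)
    return correct / len(actual)


# Made-up per-cough predictions for three patients (not study data).
coughs = [
    ("p1", "sick"), ("p1", "healthy"), ("p1", "sick"),
    ("p2", "healthy"), ("p2", "healthy"),
    ("p3", "sick"),
]
truth = {"p1": "sick", "p2": "healthy", "p3": "sick"}

per_patient = patient_level_predictions(coughs)
print(per_patient)
print(accuracy(per_patient, truth))
```

In this toy example one of the six coughs is mislabelled, but the error is outvoted by the same patient's other coughs, so every patient still ends up with the right label.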

However, when trying to distinguish among the different pathological coughs, the model was less accurate. For instance, it misattributed nearly three-fourths of asthma coughs to infections of the respiratory tract. At the patient level, more than 60 percent of children with asthma were wrongly classified as having lower respiratory tract infection.

“Analysing the audio features of unhealthy versus healthy, recovered coughs collected from the same child revealed the fact that unhealthy coughs, irrespective of the underlying conditions, are much more similar with other unhealthy coughs,” Asst Prof Chen pointed out. Such an observation is in line with anecdotal records that even doctors themselves find it difficult to distinguish diseases based on cough sounds alone.

Nevertheless, the researchers found that much of the model’s misclassification occurred when attempting to distinguish different diseases; BiLSTM remained highly accurate at differentiating healthy from sick kids.

Although the present BiLSTM model has the potential to transform respiratory disease screening in the paediatric department, there is still work to do before it is ready for clinical deployment.

In particular, developing a smartphone app that can collect audio input, forward it to a central server for processing and display results to the end-user will be key to making the model usable in the clinical setting. Once deployed, the technique can then be continuously refined using further audio data from patients to improve its accuracy at detecting pathological coughs.

“This study is only the first step towards developing a highly efficient deep neural network model that can differentiate between different unhealthy cough sounds,” Asst Prof Chen said. “Such an automated, ‘in the field’ approach will support clinical screening of respiratory illnesses associated with cough, contributing to health monitoring and screening, especially in remote and developing communities.”