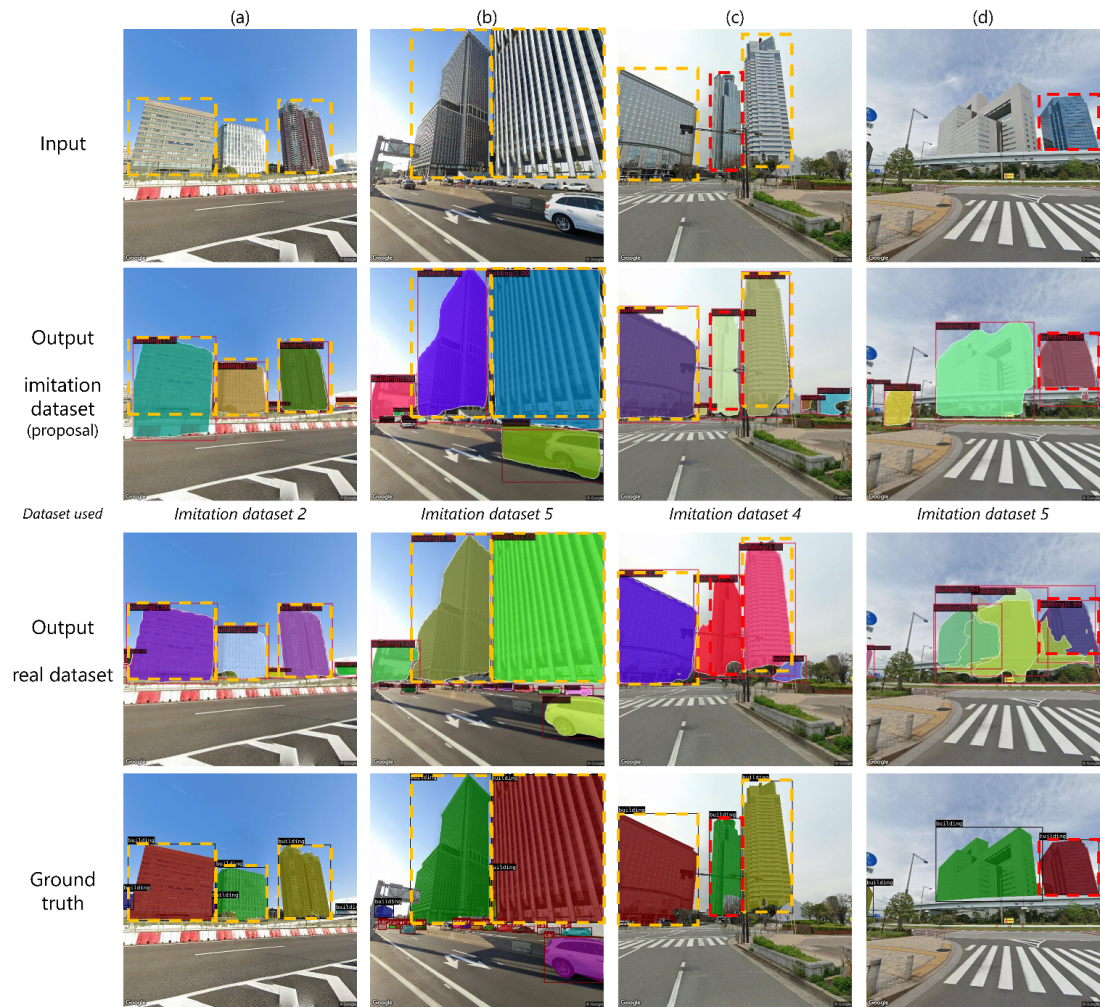

Comparison of the detection accuracy of models trained on datasets generated by the proposed method with that of models trained on real images. The proposed method can achieve results similar or superior to those of the model trained on real images. Red dashed lines indicate the areas where the model trained on generated images obtained better results than the model trained on real images.

Researchers from Osaka University develop a way to train data-hungry models to accurately assess images of urban landscapes, without needing images or 3D models of actual cities

Osaka, Japan – Recent advances in artificial intelligence and deep learning have revolutionized many industries, and might soon help recreate your neighborhood as well. Given images of a landscape, deep-learning models can help urban landscapers visualize plans for redevelopment, thereby improving the scenery or preventing costly mistakes.

To accomplish this, however, models must be able to correctly identify and categorize each element in a given image. This step, called instance segmentation, remains challenging for machines owing to a lack of suitable training data. Although it is relatively easy to collect images of a city, generating the ‘ground truth’, that is, the labels that tell the model if its segmentation is correct, involves painstakingly segmenting each image, often by hand.

Now, to address this problem, researchers at Osaka University have developed a way to train these data-hungry models using computer simulation. First, a realistic 3D city model is used to generate the segmentation ground truth. Then, an image-to-image model generates photorealistic images from the ground-truth images. The result is a dataset of realistic images similar to those of an actual city, complete with precisely generated ground-truth labels that do not require manual segmentation.

“Synthetic data have been used in deep learning before,” says lead author Takuya Kikuchi. “But most landscape systems rely on 3D models of existing cities, which remain hard to build. We also simulate the city structure, but we do it in a way that still generates effective training data for models in the real world.”

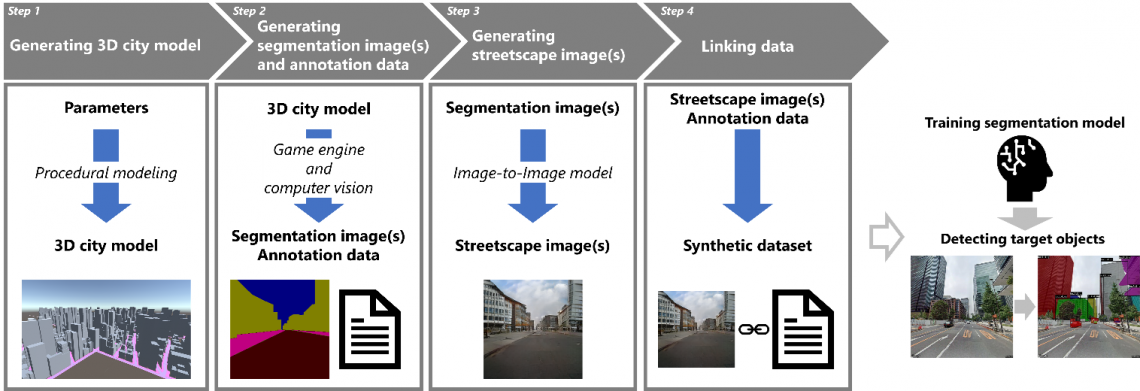

Fig. 1

Overview of the proposed method. A 3D city model is automatically generated according to the specified parameters using procedural modeling, and annotation data and training images are generated from the 3D city model using a game engine and an image translation technique, respectively.

After the 3D model of a realistic city is generated procedurally, segmentation images of the city are created with a game engine. Finally, a generative adversarial network, which is a neural network that uses game theory to learn how to generate realistic-looking images, is trained to convert images of shapes into images with realistic city textures. This image-to-image model then creates the street-view images that correspond to the segmentation images.

“This removes the need for datasets of real buildings, which are not publicly available. Moreover, several individual objects can be separated, even if they overlap in the image,” explains corresponding author Tomohiro Fukuda. “But most importantly, this approach saves human effort, and the costs associated with that, while still generating good training data.”
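The point about overlapping objects follows from where the labels come from: because every object in a generated 3D model is known in advance, a renderer can record which object is nearest the camera at each pixel. The toy Python sketch below illustrates that idea under heavy simplifying assumptions; it is not the researchers' pipeline, which uses procedural modeling and a game engine. Buildings are treated as flat rectangles in a front view, and the names `Building`, `generate_city`, `render_instance_mask` and `instance_masks`, along with all of their parameters, are hypothetical. The GAN step that turns label images into photorealistic street views is not shown.

```python
import random
from dataclasses import dataclass


@dataclass
class Building:
    """Hypothetical box-shaped building seen from the front."""
    instance_id: int  # unique label, 1..N (0 is reserved for background)
    x0: int           # leftmost image column covered
    width: int        # width in pixels
    height: int       # height in pixels, measured up from the bottom row
    depth: float      # distance from the camera; smaller means closer


def generate_city(n_buildings, img_w, min_h, max_h, seed=0):
    """Place buildings at random from a few parameters (a stand-in for procedural modeling)."""
    rng = random.Random(seed)  # fixed seed -> reproducible city
    city = []
    for i in range(1, n_buildings + 1):
        width = rng.randint(max(1, img_w // 8), max(1, img_w // 3))
        x0 = rng.randint(0, img_w - width)
        height = rng.randint(min_h, max_h)
        depth = rng.uniform(10.0, 100.0)
        city.append(Building(i, x0, width, height, depth))
    return city


def render_instance_mask(city, img_w, img_h):
    """Rasterize an instance-ID image using a depth buffer (0 = background)."""
    ids = [[0] * img_w for _ in range(img_h)]
    zbuf = [[float("inf")] * img_w for _ in range(img_h)]
    for b in city:
        top = max(0, img_h - b.height)  # buildings stand on the bottom row
        for y in range(top, img_h):
            for x in range(b.x0, min(img_w, b.x0 + b.width)):
                if b.depth < zbuf[y][x]:  # keep whichever building is nearest
                    zbuf[y][x] = b.depth
                    ids[y][x] = b.instance_id
    return ids


def instance_masks(ids):
    """Group pixels by instance ID: one pixel set per visible building."""
    masks = {}
    for y, row in enumerate(ids):
        for x, v in enumerate(row):
            if v != 0:
                masks.setdefault(v, set()).add((y, x))
    return masks
```

For example, `render_instance_mask(generate_city(6, 64, 10, 40, seed=42), 64, 48)` yields a 48 × 64 ID image. Because each pixel keeps only the nearest building, a building partly hidden behind another still receives its own mask for the part that remains visible, and no manual annotation is involved.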

To test this, the researchers trained one segmentation model, a ‘mask region-based convolutional neural network’ (Mask R-CNN), on the simulated data and another on real data. The models performed similarly on instances of large, distinct buildings, even though the time needed to produce the dataset was reduced by 98%.

The researchers plan to investigate whether improvements to the image-to-image model increase performance under a wider range of conditions. For now, this approach generates large amounts of data with remarkably little effort. The researchers’ achievement could help address current and upcoming shortages of training data, reduce the costs of dataset preparation and usher in a new era of deep learning-assisted urban landscaping.

###

The article, “Development of a synthetic dataset generation method for deep learning of real urban landscapes using a 3D model of a non-existing realistic city,” was published in Advanced Engineering Informatics at DOI: https://doi.org/10.1016/j.aei.2023.102154

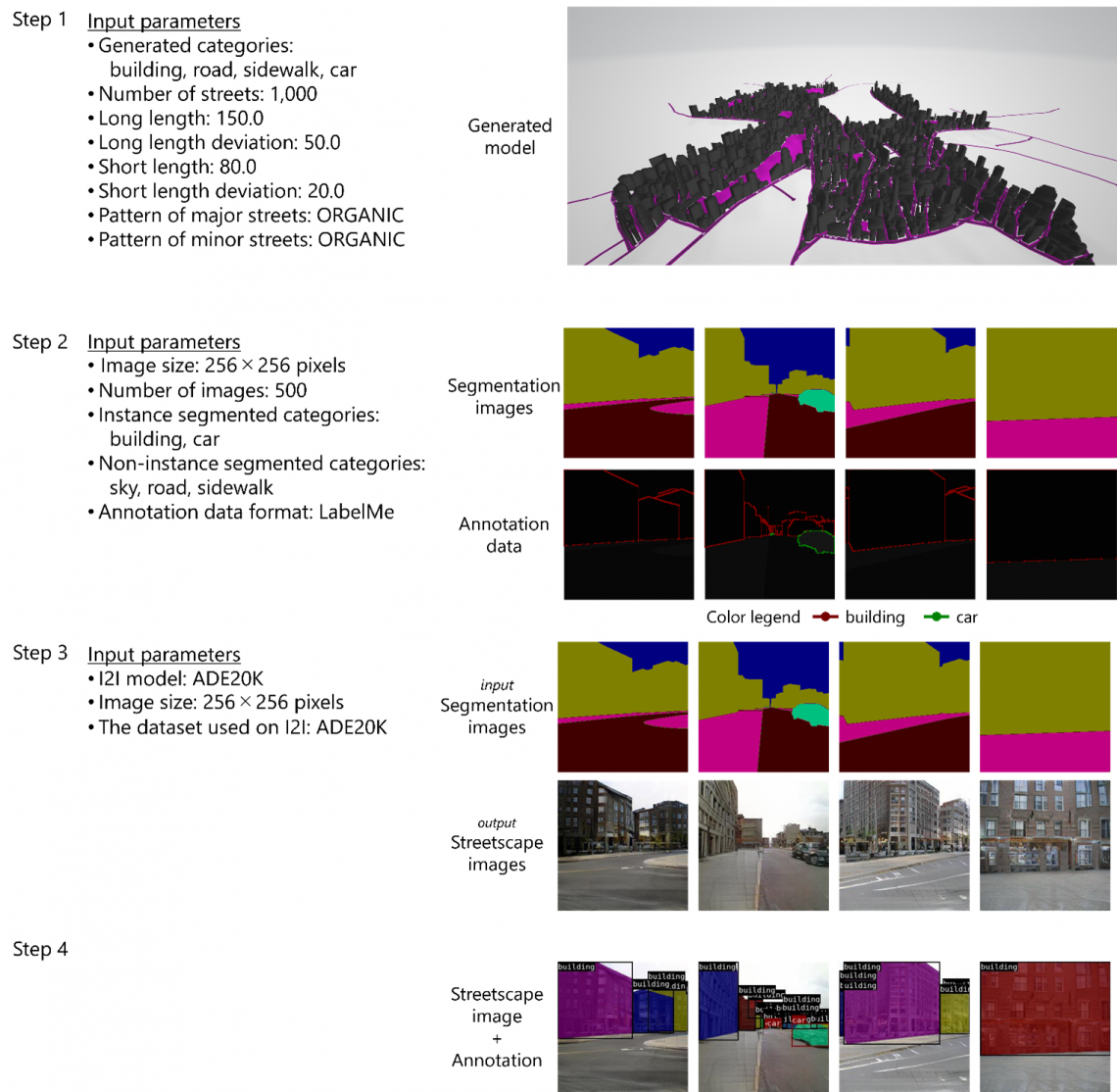

Fig. 2

Data generated at each step using the developed framework. Left figure: parameter settings and other elements used in this study. Right figure: examples of data generated using the parameter settings.

About Osaka University

Osaka University was founded in 1931 as one of the seven imperial universities of Japan and is now one of Japan's leading comprehensive universities with a broad disciplinary spectrum. This strength is coupled with a singular drive for innovation that extends throughout the scientific process, from fundamental research to the creation of applied technology with positive economic impacts. Its commitment to innovation has been recognized in Japan and around the world, with the university being named Japan's most innovative university in 2015 (Reuters 2015 Top 100) and one of the most innovative institutions in the world in 2017 (Innovative Universities and the Nature Index Innovation 2017). Now, Osaka University is leveraging its role as a Designated National University Corporation selected by the Ministry of Education, Culture, Sports, Science and Technology to contribute to innovation for human welfare, sustainable development of society, and social transformation.

Website: https://resou.osaka-u.ac.jp/en